- Home

- Services

- About

- News

- Contact

- Autotune for pro tools free download

- Install lexmark z645 printer without cd

- Download game 7 sins ppsspp

- Wireless router for mac laptop

- Mqtt docker tutorial

- How to update your mac to 10-3

- Frontier internet speed test

- Sell your mac back to apple

- Daz studio genesis 2 morphs

- Star wars episode i the phantom menace comic

- V50 vhf radio nmea 2000 network

- Microsoft business contact manager standalone

- Watch poison ivy 2 online megavideo

- Vectorworks viewer 2018

- Zebronics g41 motherboard driver download

- J cole born sinner album all songs mp3 download

- How to download the witcher 3 from disk

- Easy postgresql client windows

- Alan dean foster books online free

- Lync skype for business download free

- English to english dictionary free

- Wireless ps3 controller on mac

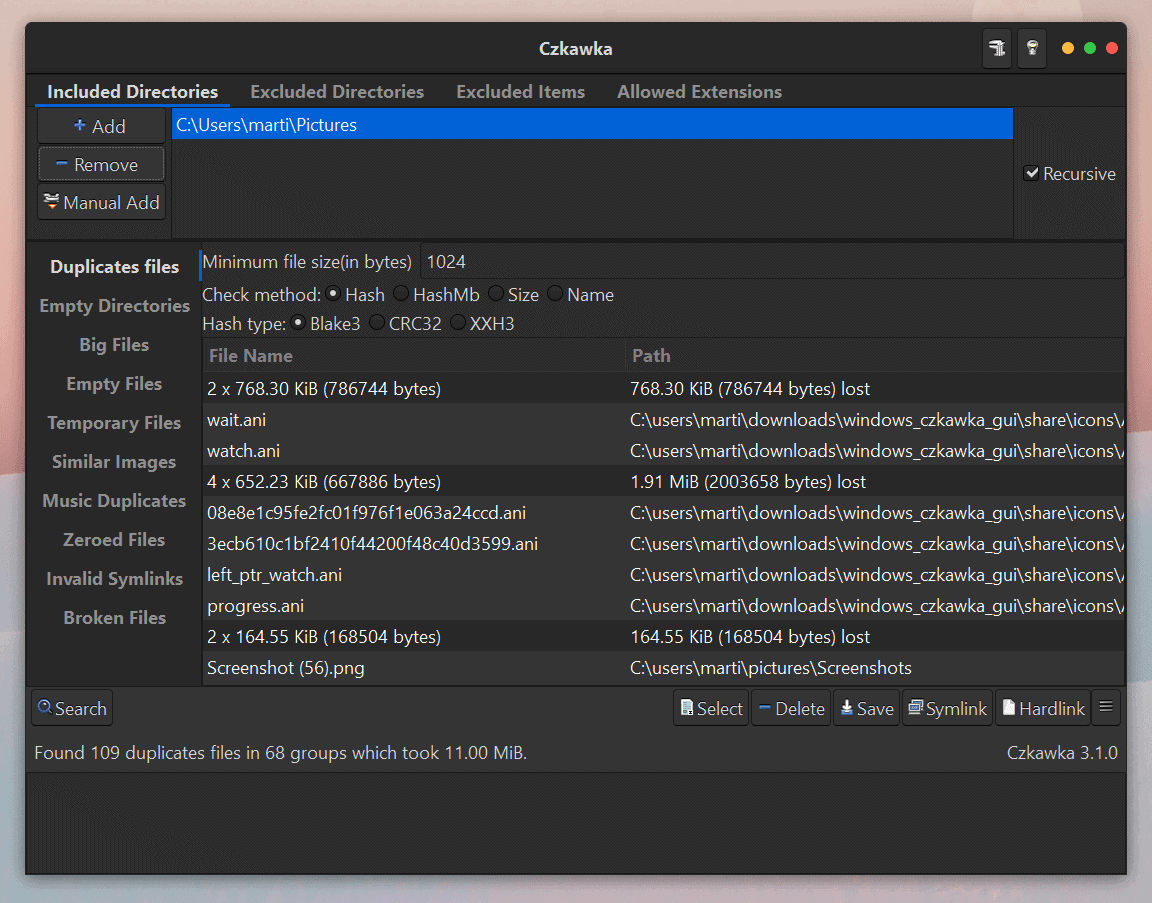

- Hash based file deduplication software

- Snap on modis ultra auction

- Uninstall hangouts on mac

- Khaad bengali movie download

- Iphone choose safari or chrome to open

- Microsoft teredo tunneling adapter driver problem windows 7

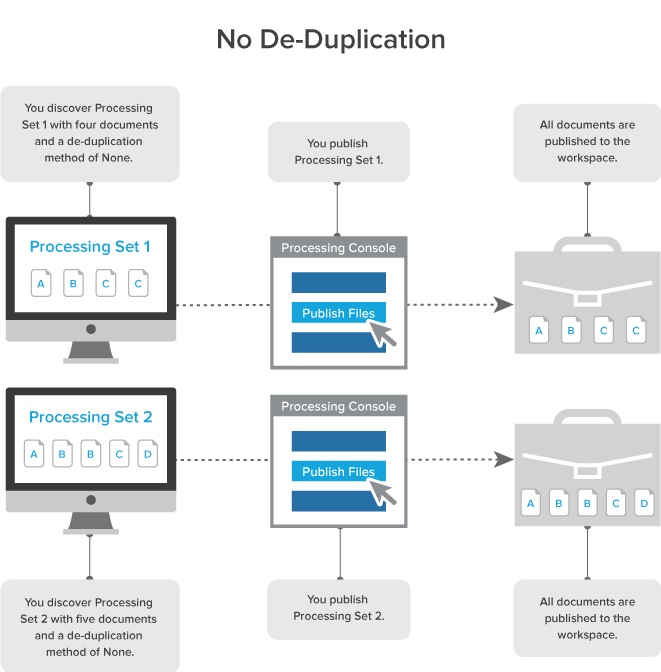

Albert Einstein said of quantum mechanics: “I, at any rate, am convinced he does not throw dice” (“he” meaning God), often paraphrased as “God does not play dice.”

This is probably a vision of one of the most profound scientific discontinuities of all time. Just as Newtonian physics and geometry were broken by Einstein's theory of relativity, Einstein's view of the predictable universe was shattered by quantum mechanics. This is pretty heavy stuff for a computer technology essay, but there is a point.

One of the more interesting things to happen in data storage technology in the last few years is the technique of data block deduplication. This is a process by which computer files are analyzed for blocks (fixed-length quantities of data) that are the same as other blocks within the file or in other files. Then, as blocks are found to be duplicates, only a single block is stored, with the other identical blocks referencing the first one.

In old-fashioned data theory, block-level deduplication was not practical. Why? Well, if your data block contained 16K (16384 bytes), then you really couldn't represent that much data in anything less than 16384 bytes. That block could be ANYTHING within the size. The amount of additional data required to represent duplicate blocks would be bigger than any sort of savings you could get. The problem is that you can't 100% guarantee all the possible contents of a block with any less data than the block can contain. Thus, any attempt to remove duplicate blocks would invariably fail, or be so specialized, relying on a priori knowledge of the data, as not to be practical.

This is where, I believe, the quantum mechanical view of things is shaking up the old data theory. What if you could reasonably represent 16K of data with 32 bytes of data? Obviously, from a 1:1 numerical point of view, it can't be done, but it can be done on a probabilistic basis. If you could create a pseudo-random number with a “high probability” of uniqueness that represented a block of data, then maybe it could be done.

Enter the SHA-2 series of hashing algorithms.

Secure Hash Algorithm, or SHA, is a cryptographic “hash.” A hash is a smaller numerical representation of a much larger piece of data. This essay won't go into detail about hashing, because if you know it, it is redundant, and if you don't know what hashing is, you could find a better description on Wikipedia. SHA is “cryptographic” because it is difficult or, in practice, impossible to reverse the hash and generate the source data, and it is unlikely that any two data sources will produce the same hash value. These very qualities lend themselves well to the problem of deduplication.

To fully understand this, let's discuss some numbers. A SHA-2 256-bit hash (SHA-256) is a 32-byte pseudo-random number. That means that there are 2^256 possible hash values. So, if we assume the SHA-2 hash is as random as it claims to be (and there is good evidence that it is fairly good), and if we assume a finite and limited amount of source data, then we can reasonably conclude that it is very unlikely that any two differing blocks encountered on a system will produce the same hash.

There is a lot of math to verify or disprove this, of course. One of the more interesting reads is the “birthday paradox” (look it up on Google). The “birthday paradox” is a statistical treatment of the likelihood that, in any random set of people, two would have the same birthday. It is called a paradox because it seems absurd that a set of 23 people, with no twins, has a 50% chance of including two people with the same birthday. The model is directly relatable to statistical block-level deduplication: the possible hash values play the role of the days of the year, and the blocks play the role of the people. Again, there are better treatments of this elsewhere and you are encouraged to look.

This leads us to the quote “God does not play dice.” Well, here we need to make a few mental leaps. The old-school physicists believed that the universe would eventually prove to be 100% predictable. The quantum mechanical physicists came to realize that uncertainty is part of the universe. Like the old-school physicists, the old-school data theory is just as wrong. Yes, it is conceded that block deduplication using a hash algorithm has a chance, no matter how small, of introducing an error in the data, but it “probably” won't.

Here's where we get into the philosophical aspect of this. Conceptually, old-school data guys (like myself, admittedly) don't think in probability. When we design data systems, we design for reliability and we design for predictability. We would never intentionally design a system that “probably” works. Yet, as it turns out, that is all anyone can do.

Everything is a probability. No matter how reliable and predictable we design a system to be, there is always a probability of failure.
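To make the block deduplication process described in this essay concrete, here is a minimal sketch in Python. It is an illustration under assumptions, not how any particular product works: the function names `dedup_blocks` and `rebuild` and the in-memory dictionary used as a block store are hypothetical, and the 16384-byte block size is simply the essay's example figure. Blocks whose SHA-256 digests match are treated as identical without comparing their bytes, which is exactly the “probably” discussed in the essay.

```python
import hashlib

BLOCK_SIZE = 16384  # 16K blocks, the example size used in the essay


def dedup_blocks(data: bytes, block_size: int = BLOCK_SIZE):
    """Split data into fixed-length blocks and keep each distinct block once.

    Returns (store, refs):
      store maps a 32-byte SHA-256 digest to the block's bytes,
      refs holds one digest per block, in original order.
    """
    store = {}
    refs = []
    for offset in range(0, len(data), block_size):
        block = data[offset:offset + block_size]
        digest = hashlib.sha256(block).digest()  # 32-byte fingerprint
        # Assumption: equal digests mean equal blocks (no byte comparison).
        store.setdefault(digest, block)
        refs.append(digest)
    return store, refs


def rebuild(store, refs) -> bytes:
    """Reassemble the original data by following the digest references."""
    return b"".join(store[digest] for digest in refs)


if __name__ == "__main__":
    # Five blocks, but only two distinct contents.
    sample = b"A" * BLOCK_SIZE * 3 + b"B" * BLOCK_SIZE + b"A" * BLOCK_SIZE
    store, refs = dedup_blocks(sample)
    print(len(refs), "blocks referenced,", len(store), "blocks stored")
    assert rebuild(store, refs) == sample
```

A more cautious design would compare the bytes of two blocks whenever their digests match, trading extra reads for certainty; the sketch skips that step on purpose to show the purely probabilistic approach.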
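The birthday-paradox reasoning in this essay can also be checked numerically. The sketch below is only an estimate under the essay's own assumption that hash values behave like uniformly random numbers; the function names are hypothetical, and the figure of 2^40 blocks is an arbitrary illustration (at 16K per block that is 16 PiB of distinct data), not a number from the essay. The approximation used is the standard birthday bound, p ≈ 1 − exp(−n(n−1) / 2N), for n items drawn from N possible values.

```python
import math


def exact_collision_probability(n: int, space: int) -> float:
    """Chance that at least two of n uniform random picks from `space`
    possible values coincide. Only practical for small n."""
    p_all_unique = 1.0
    for i in range(n):
        p_all_unique *= (space - i) / space
    return 1.0 - p_all_unique


def approx_collision_probability(n: int, space: int) -> float:
    """Birthday-bound approximation 1 - exp(-n(n-1) / (2 * space)).

    expm1 keeps precision when the probability is extremely small.
    """
    return -math.expm1(-n * (n - 1) / (2 * space))


if __name__ == "__main__":
    # Classic case: 23 people, 365 birthdays -> a little over 50%.
    print("23 people, exact: ", exact_collision_probability(23, 365))
    print("23 people, approx:", approx_collision_probability(23, 365))

    # Hypothetical system holding 2**40 distinct 16K blocks, SHA-256 digests.
    print("2**40 blocks, SHA-256:",
          approx_collision_probability(2**40, 2**256))
```

Under these assumptions the chance of any two differing blocks sharing a SHA-256 digest is far below one in 10^50, which is the sense in which deduplication “probably” works.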
